X tests AI labeling to combat the rise of generated content

The app is working on a process that would require users to label posts created using artificial intelligence tools, according to an app researcher.

X is working to combat the rise of AI-generated content in the app, and a key step in that process is effective AI content labeling, which ensures that users are aware of AI-generated posts in-stream.

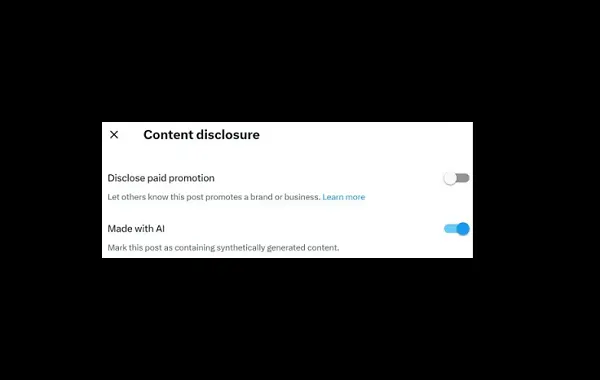

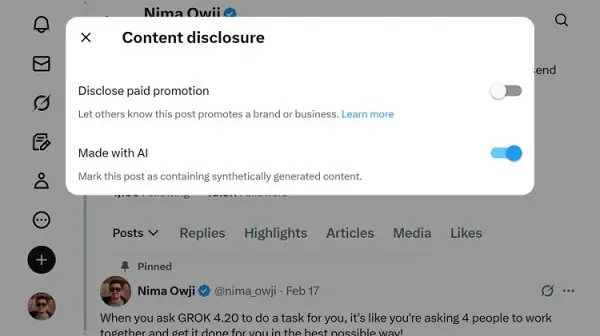

On that front, X is developing a new label for AI-generated content, which users will seemingly soon be required to activate on any post that includes material created with AI tools.

As shown in this image, posted on X by app researcher Nima Owji, the platform is working on a new post-level toggle that will mark a post as containing “synthetically generated content.”

X already adds watermarks to images and videos generated by its proprietary Grok chatbot to make clear that the content is AI-generated. But there’s no requirement, at this stage, to include this disclosure within X posts. And with more and more developers looking to flood X with AI-generated content, X’s head of product Nikita Bier has flagged this as a significant concern, which may explain the development of this new AI disclosure toggle.

X hasn’t shared any further details on the toggle yet, as it is still in development.

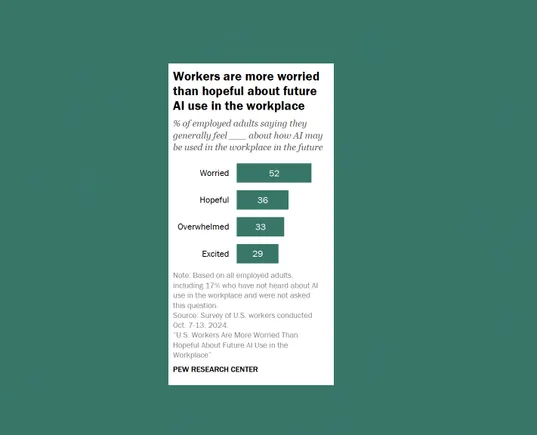

Broader AI disclosure is becoming more pressing as more AI-generated material misleads and confuses users, with fake depictions of everything from terrorist attacks, to naked images of celebrities, to U.S. President Donald Trump playing ice hockey (which President Trump himself posted to celebrate the U.S. victory over Canada at the recent Winter Olympics).

And while most of these depictions are obviously fake, that may not be so obvious to everyone. As AI depictions become increasingly lifelike, the chances that audiences will believe them and accept them as fact are growing.

That is why effective AI content labeling is needed on all apps. X is essentially working toward what could soon become an industry standard for disclosure, bringing more transparency to online interactions.

The question, then, is whether putting the onus on users to activate this toggle will be an effective approach. There is also a risk that automated profiles will keep posting until they get banned, only to start new accounts and continue sharing fake content.

FrankLin