How We Operate as an AI-First Company

This is part three of a three-part series on how HubSpot transformed with AI. Part one covers how we build with AI. Part two covers how we grow with Agent-first GTM.

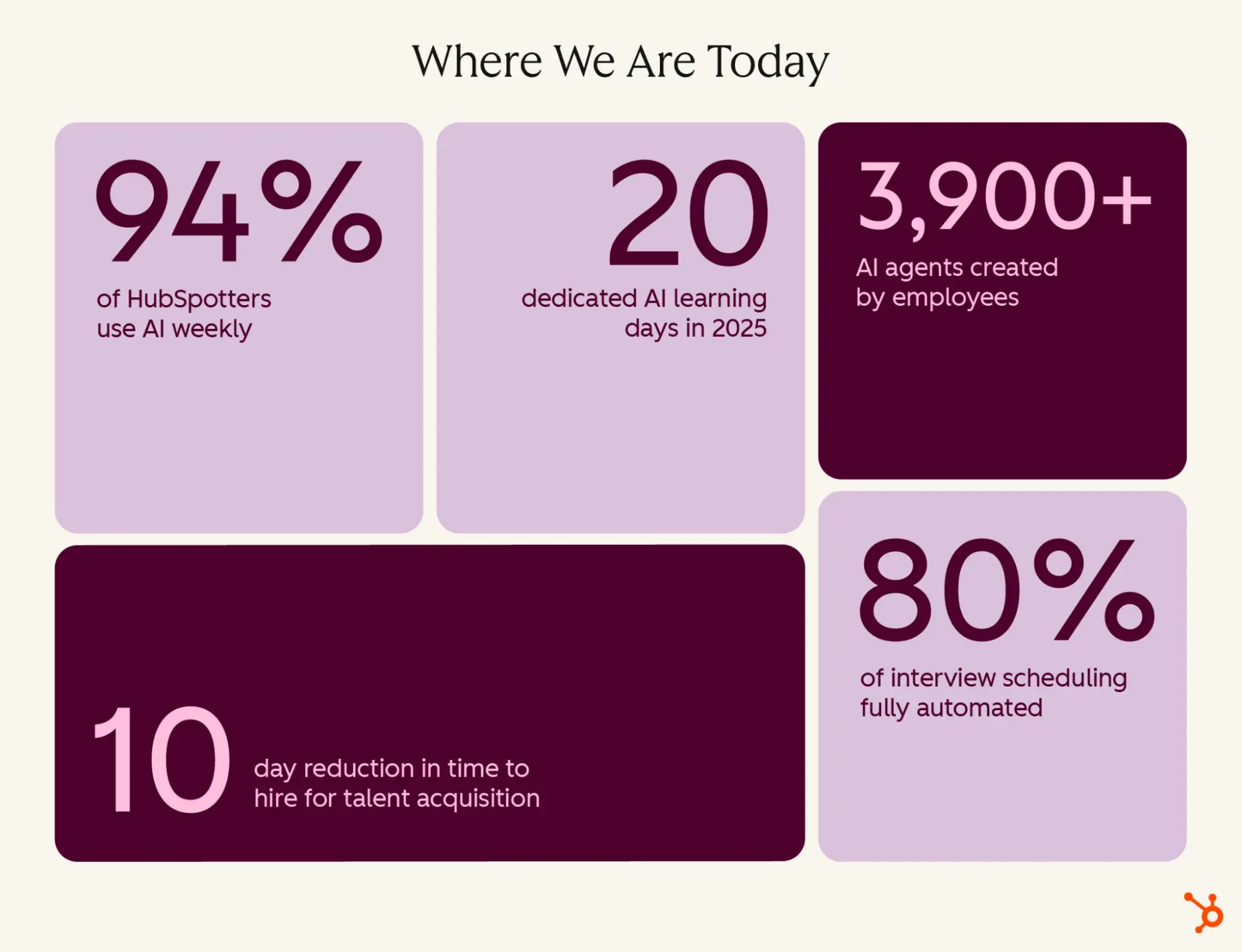

Building the right engineering platform and rebuilding your go-to-market motion are meaningless if the organization running them isn’t ready. That’s the part most transformation playbooks skip. It’s also the part that determines whether any of it sticks. We didn’t skip it; we doubled down. As a result, 94% of HubSpotters use AI weekly, employees have built over 3,900 AI agents, and our talent profile looks fundamentally different than it did three years ago. This is the playbook for the organizational transformation that made everything else possible.

Stage 1: Building AI Fluency (2023–2025)

The first stage is about fluency across the entire organization, and it has to start with commitment from the top. Leaders have to model the behavior, share their own experiments, and create the conditions for everyone else to follow, rather than issue mandates. We ran three plays to get there, and each is repeatable for any organization:

Provide the toolset. Every HubSpotter received enterprise licenses for a core set of AI tools. A central AI strategy team manages vendor relationships, sets security standards, and streamlines adoption of new tools, which eliminates the procurement and security bottlenecks that slow transformation at most companies. AI fluency can’t be a competitive advantage you reserve for certain teams. It has to be a baseline expectation for all teams.

Shift the mindset. This meant fostering a culture of experimentation in which employees feel empowered to try, and to embrace, new ways of working. We updated our company values to encourage this perspective, adding “be bold, learn fast” as a core value. Employees share use cases and experiments in dedicated chat channels. Leaders participate alongside their teams, often getting reverse-mentored by people further along in their experimentation, and executives share their own learnings in weekly updates. We also changed our organizational clock speed, moving from annual planning cycles to six-week sprints to keep pace with the technology.

To track our progress, we set a clear, company-wide usage goal: 80% weekly active AI usage by the end of 2025. Then we tracked it openly (by team, by tool, and by use case) and made the data visible to everyone. Transparency drove accountability in both directions: teams that were behind had a clear signal, and teams that were ahead became models for others.

We want to be clear about why we tracked usage rather than outcomes at this stage. Stage 1 was about building AI fluency. You can’t measure outcome improvement from tools people aren’t using yet. Usage was a leading indicator, not the destination. This wasn’t tokenmaxxing; it was a necessary step on the way to outcome-maxxing in Stage 2.

Build the skillset. We carved out protected time for learning. This included hackathons and 20 company-wide AI learning days in 2025. AI was woven into onboarding from day one and into ongoing manager development. The goal was simple: shift the question from “should I use AI for this?” to “how do I use AI better?”

The outcome of Stage 1 was a new talent profile. By the end of this stage, we had an organization that was becoming AI-fluent: 94% of HubSpotters were using AI weekly, and employees had created over 3,900 AI agents to improve their own work.

Stage 2: Team-Level Transformation (2025–Present)

When employees each use AI in different ways for different use cases, you get individual productivity but not business outcomes. To achieve team-level transformation, you need clear priorities with real accountability behind them.

To start, we plotted teams against two dimensions in a maturity and readiness analysis. That analysis produced three categories for us:

Pace setters: teams that were already moving fast. We don’t want to slow these teams down; we want to support them.

Near-in wins: teams that have obvious automation opportunities but haven’t acted on them. The bottleneck for these teams is almost always leadership attention, not tooling.

Big bets: teams with the highest potential but the most dependencies. They need dedicated investment in data, systems, and change management.

Here’s where our teams fell, each requiring a different playbook:

Pace setters: Engineering, Support, and Marketing had already seen major productivity and efficiency gains through proven AI use cases, leadership sponsorship, and measurement. They needed minimal support and continued their momentum through AI fluency investments.

Marketing is the clearest example. The team reimagined workflows across the board. AI-powered email personalization drove an 82% improvement in email conversions. An AI chatbot now handles over 82% of website inquiries and was generating 10,000+ sales meetings per quarter by Q4 2025. A video ad production test delivered AI-generated spots at $300–$3,000 each, versus $300K–$500K with traditional production, and AI-assisted blog production cut writer hours per article by 60%.

Near-in wins: Recruiting and Operations had clear automation opportunities that could be unlocked with the right tools. The key lever was leadership attention: “gemba walks,” in which leaders got into the work alongside teams to identify exactly where AI could replace or augment specific tasks, and drove adoption hands-on rather than from a distance.

Talent Acquisition is an example. By embedding AI directly into the hiring funnel, we saw a 10-day reduction in time to hire and a 30% reduction in application review time. We fully automated 80% of interview scheduling tasks, resulting in a 90% increase in scheduling volume with no additional headcount. The share of sourced hires from past candidates grew from 8% to 18% in the first 90 days, a direct result of AI resurfacing talent that would otherwise have been invisible.

Big bets: Sales, Customer Success, and Product had the highest potential but needed significant investment in data, systems, and change management. These teams received dedicated AI pods: cross-functional teams of functional experts, data scientists, and ops engineers focused on reimagining specific workflows through rapid experimentation and iteration.

The deeper lesson of Stage 2 is that not every team needs the same support. The maturity and readiness analysis is what tells you where to push, where to support, and where to invest. Without it, you end up applying the same approach everywhere and wondering why only some of it works.

Stage 3: Institutional Transformation (2026 and Beyond)

We are early in Stage 3. But the direction is clear, and it will be the most important stage of all.

Stages 1 and 2 solved for individual and team productivity. Stage 3 is about building institutional AI. The distinction matters. Making every employee 10x more efficient doesn’t make a company 10x more productive unless the institution itself is redesigned around new AI capabilities.

The foundation of Stage 3 is institutional context. It means giving everyone access to the right tools, data, and information, and encoding company processes into agents that can act on them at scale.

The difference becomes visible in how work gets done day to day. When an engineer needs context on a codebase, they don’t ask a colleague; they ask HubSpot’s internal coding agent. When a sales manager wants to understand why a deal stalled, they don’t pull a report; they ask our native Guided Selling Assistant. When a new hire needs to understand how HubSpot makes decisions, they don’t wait for onboarding; they ask our internal AI tool. That is what institutional AI looks like in practice: the collective context of the organization, available to everyone, at the moment they need it.

Moving to this stage also requires confronting questions that earlier stages don’t raise. When agents own steps in a workflow end-to-end, governance matters more. Who can see what? What decisions require human sign-off? How do you catch bad outputs before they compound? We’ve had to build for these questions deliberately, establishing clear permissions, audit trails, and escalation paths so that the speed of agents doesn’t outpace our ability to oversee them.

AI transformation starts with a strong foundation

We are still on this journey. But we understand what’s at stake. The companies that build institutional AI are the ones that will have an advantage. But to do it, don’t start with AI. Start with the work. Find the workflow that’s slow, expensive, or brittle. Find the team that is most ready. Run the experiment, measure it honestly, then commit to what the data shows.

The same principle runs through everything in this series: the tools are just the starting point. Building the foundation, technically, structurally, and culturally, is what allows you to scale. In engineering, that foundation is a platform. In go-to-market, it’s a flywheel. In how you operate, it’s the organization itself. The companies that figure this out won’t just use AI better; they’ll grow better.
