TikTok publishes first transparency report on EU hate speech removal

The publication is part of the platform’s compliance work with the EU Digital Services Act under the EU Code of Conduct on Countering Illegal Hate Speech Online.

TikTok published its first transparency report under the EU Code of Conduct on Countering Illegal Hate Speech Online. The new report provides an overview of TikTok’s work to enforce rules against hate and illegal hate speech.

The updated reporting requirement is a new element of the EU Digital Services Act, and was implemented in order to add another level of transparency and accountability to social platform operations in the region.

At the same time, the report gives the public more insight into hate speech detection and removal efforts within social apps.

So how is TikTok doing on this element?

TikTok reported that 88.7% of content flagged by users is reviewed within 24 hours, while 96.3% of the content that TikTok removed for breaking its rules was taken down before a user report.

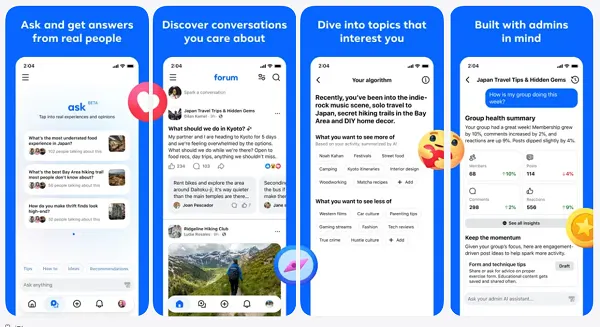

As per TikTok: “In H2 of 2025, we received 56,549 user reports of illegal content related to hate speech, corresponding to 30,128 unique pieces of content across video, live streams, comments, ads, and product listings.” TikTok said the median time to action for these reports was 3.05 hours, and that it used a range of approaches to detect and remove hate speech. Those included computer vision models that identify objects which violate policies (such as emblems and logos known to be associated with hate groups), audio detection that flags audio similar to previous violations, and text reviews.

TikTok also outlined the various partnerships it has in place to broaden its hate speech detection processes. Those include the International Network Against Cyber Hate, DigiQ and Point de Contact. Last year, TikTok also joined the Global Internet Forum to Counter Terrorism.

TikTok further noted that its enforcement efforts are always evolving in order to detect more instances of hate speech and ensure that its platform is not being used to amplify such content.

Which is an important focus. Social platforms empower connections between billions of people, which also means that social media apps have a responsibility to manage how their platforms are used and what types of activity they incentivize or facilitate.

To some, this is viewed as a free speech issue. This has become a bigger point of contention in recent years, with Elon Musk’s purchase and reformation of the app formerly known as Twitter turning this into an ideological argument. Musk and others have framed content moderation as a method of political control by left-leaning entities.

Most evidence, however, suggests that social media moderation decisions have generally been made with a view to reducing the capacity for their platforms to be used as a bullhorn for dangerous extremists. Without moderation, some argue, these extremists would be able to grow their followings and spread hate and division to a broader audience.

There is always a balance required in moderating approaches, however. Reports such as this one, combined with platform definitions of hate speech, provide more insight into how social apps are working to combat dangerous extremism.
